Visuomotor Experiment Framework

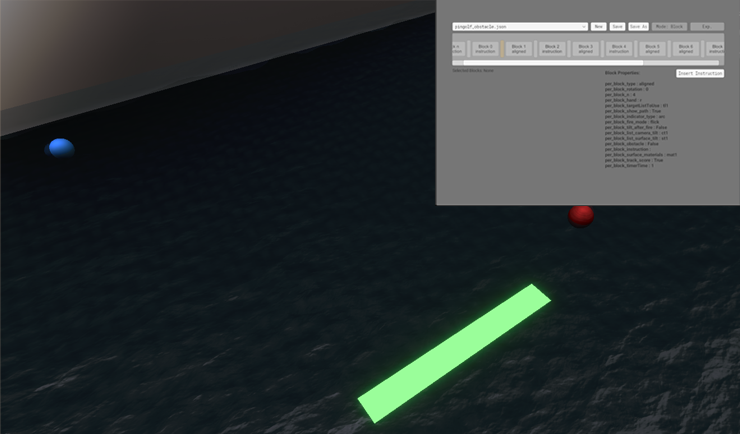

A Unity-based platform for designing and running visuomotor learning experiments across VR headsets and desktop screens.

Overview

The Visuomotor Experiment Framework (VEF) is a Unity (C#) platform that streamlines the design and deployment of motor learning experiments in immersive and traditional environments. It deploys to both head-mounted VR displays (HMDs) and desktop screens, and the same experiment logic runs across input hardware (mice, visually tracked objects, and robotic arms) without duplicated effort.

The framework was developed to address a real bottleneck in our lab: setting up a new experiment meant weeks of Unity boilerplate before any research task could be written. VEF abstracts that away, exposing a data-driven API for defining trial sequences, visual feedback conditions, and hardware configurations.
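To make "data-driven" concrete, here is a minimal sketch of how a trial sequence could be described as plain data and expanded into an ordered trial list. All type and field names (`ExperimentConfig`, `TrialBlock`, `InputDevice`, `cursorRotationDeg`, and so on) are hypothetical and chosen for illustration; they are not VEF's actual API. The code uses only the .NET base library, so it should compile inside or outside Unity.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical types for illustration only; not VEF's actual classes.
public enum InputDevice { Mouse, TrackedObject, RobotArm }

[Serializable]
public class TrialBlock
{
    public string name;              // label for the block, e.g. "baseline"
    public int trialCount;           // number of trials in this block
    public bool showCursor;          // feedback condition: is the cursor visible?
    public float cursorRotationDeg;  // feedback condition: rotation applied to the cursor (assumed parameter)
}

[Serializable]
public class ExperimentConfig
{
    public InputDevice device;                               // hardware configuration
    public List<TrialBlock> blocks = new List<TrialBlock>(); // ordered trial blocks
}

public static class TrialSequence
{
    // Expands each block into one entry per trial, preserving block order.
    public static List<TrialBlock> Expand(ExperimentConfig config)
    {
        var trials = new List<TrialBlock>();
        foreach (var block in config.blocks)
        {
            for (int i = 0; i < block.trialCount; i++)
            {
                trials.Add(block);
            }
        }
        return trials;
    }
}
```

Under these assumptions, a config with a 20-trial baseline block followed by a 60-trial rotation block expands to an 80-entry list that a task runner could step through, with no experiment-specific Unity code involved.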

Example Tasks

The videos below demonstrate four task types running inside the framework, from simple reaching tasks to gaze-based localization of unseen limbs.

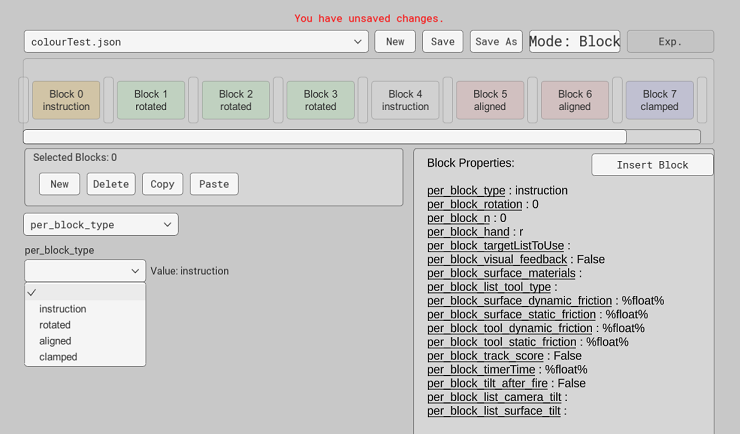

JSON Configuration Tool

Configuring a new experiment in VEF involves writing a JSON file that specifies task parameters, trial sequences, and feedback conditions. To make this accessible to researchers without programming backgrounds, we built a companion GUI tool — the VEF JSON Config — that generates these files visually.
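As a rough illustration of the kind of file involved, a config for a simple reaching task might look like the sketch below. Every key and value here is an assumption made for this example, not VEF's real schema; the `_comment` field stands in for a comment, since JSON has no comment syntax.

```json
{
  "_comment": "Illustrative only: all keys and values are assumptions, not VEF's real schema.",
  "task": {
    "type": "reaching",
    "targetDistanceCm": 10,
    "targetAnglesDeg": [45, 90, 135]
  },
  "blocks": [
    { "name": "baseline", "trialCount": 20, "showCursor": true,  "cursorRotationDeg": 0 },
    { "name": "rotation", "trialCount": 60, "showCursor": true,  "cursorRotationDeg": 30 },
    { "name": "washout",  "trialCount": 20, "showCursor": false, "cursorRotationDeg": 0 }
  ]
}
```

In this sketch the `task` object carries the task parameters, the order of `blocks` defines the trial sequence, and the per-block cursor fields express the feedback condition.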

The tool was designed around a human-centered workflow: a researcher can storyboard a session, configure each trial block, and export valid configs without opening the Unity editor. During piloting, this reduced both setup time and configuration errors.